Did you know that Google doesn’t like content issues on your website? These issues include duplicate, missing, or problematic title tags and meta descriptions.

Although these issues will not prevent your site from appearing in Google search results, fixing them gives Google more information about your pages and may even help your site get more traffic.

One issue most new bloggers aren’t aware of is duplicate content. If you are using WordPress as your blogging platform, you should know about category, tag, and paged archive pages. By default, search engines index these archives, and because they repeat content that already appears on your posts, the result is duplicate titles, meta descriptions, and more.
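To make the duplication concrete, here is a minimal Python sketch of where a single post shows up under WordPress’s default archive URLs. It assumes a simplified model: pretty permalinks of the form `/post-slug/`, the default `category` and `tag` bases, and only the home page listing being paginated. `Post` and `archive_urls` are hypothetical names for illustration, not WordPress functions.

```python
from dataclasses import dataclass, field


@dataclass
class Post:
    """A blog post in a simplified, hypothetical model of a WordPress site."""
    slug: str
    categories: list[str] = field(default_factory=list)
    tags: list[str] = field(default_factory=list)


def archive_urls(post: Post, position: int, per_page: int = 10) -> list[str]:
    """Return every URL on which this post's content (or excerpt) is shown.

    position is the post's 0-based index in the newest-first home listing;
    per_page is the number of posts per listing page (assumed to be 10).
    """
    urls = [f"/{post.slug}/"]  # the single-post page itself
    page = position // per_page + 1
    urls.append("/" if page == 1 else f"/page/{page}/")  # paginated home listing
    urls += [f"/category/{name}/" for name in post.categories]  # category archives
    urls += [f"/tag/{name}/" for name in post.tags]  # tag archives
    return urls


if __name__ == "__main__":
    post = Post("robots-txt-tips", categories=["seo"], tags=["wordpress", "robots"])
    for url in archive_urls(post, position=12):
        print(url)
```

In this example one post is reachable at five URLs: `/robots-txt-tips/`, `/page/2/`, `/category/seo/`, `/tag/wordpress/` and `/tag/robots/`. Only the first is the post itself; the other four are archive pages repeating its content.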

To avoid duplicate content on your website, you can use a robots.txt file to block search engines from crawling specific pages. If you are using WordPress as your blogging platform, you can use the robots.txt file below to avoid content issues on your website.

```
# Allow Google AdSense bot on entire site
User-agent: Mediapartners-Google
Disallow:

# General
User-agent: *
Disallow: /cgi-bin
Disallow: /wp-admin
Disallow: /wp-includes
Disallow: /wp-content/plugins
Disallow: /wp-content/cache
Disallow: /wp-content/themes
Disallow: /wp-login.php
Disallow: /about
Disallow: /contact
Disallow: /privacy-policy
Disallow: /tag/
Disallow: /category/
Disallow: /page/
Disallow: /author/
Disallow: /images/
Disallow: /2011/
Disallow: /2012/
Disallow: /trackback
Disallow: /feed
Disallow: /comments
Disallow: /*/trackback
Disallow: /*/feed
Disallow: /*/comments
Allow: /wp-content/uploads
```
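Before uploading a robots.txt, it can help to check which URLs it actually blocks. Below is a minimal sketch using Python’s standard `urllib.robotparser` module on the rules above; `example.com` and the sample URLs are placeholders. Two caveats: `urllib.robotparser` applies the first matching rule in file order and does plain prefix matching, whereas Google uses the most specific matching rule and understands the `*` wildcard. The wildcard lines are therefore effectively ignored by this checker, and Google’s verdict can differ in edge cases.

```python
from urllib.robotparser import RobotFileParser

# The WordPress robots.txt rules from this post, embedded as text so the
# check runs offline without fetching anything.
ROBOTS_TXT = """\
# Allow Google AdSense bot on entire site
User-agent: Mediapartners-Google
Disallow:

# General
User-agent: *
Disallow: /cgi-bin
Disallow: /wp-admin
Disallow: /wp-includes
Disallow: /wp-content/plugins
Disallow: /wp-content/cache
Disallow: /wp-content/themes
Disallow: /wp-login.php
Disallow: /about
Disallow: /contact
Disallow: /privacy-policy
Disallow: /tag/
Disallow: /category/
Disallow: /page/
Disallow: /author/
Disallow: /images/
Disallow: /2011/
Disallow: /2012/
Disallow: /trackback
Disallow: /feed
Disallow: /comments
Disallow: /*/trackback
Disallow: /*/feed
Disallow: /*/comments
Allow: /wp-content/uploads
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    parser = build_parser(ROBOTS_TXT)
    checks = [
        ("Googlebot", "https://example.com/tag/wordpress/"),
        ("Googlebot", "https://example.com/hello-world/"),
        ("Googlebot", "https://example.com/wp-content/uploads/2012/05/photo.jpg"),
        ("Mediapartners-Google", "https://example.com/tag/wordpress/"),
    ]
    for agent, url in checks:
        verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
        print(f"{agent:<22} {verdict:<8} {url}")
```

With these rules, Googlebot is blocked from the tag archive but allowed on the post and the uploaded image, while the AdSense crawler (`Mediapartners-Google`) may fetch everything because its group has an empty `Disallow:`.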

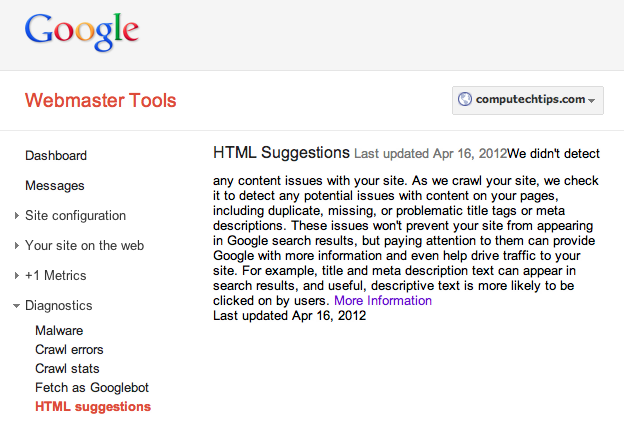

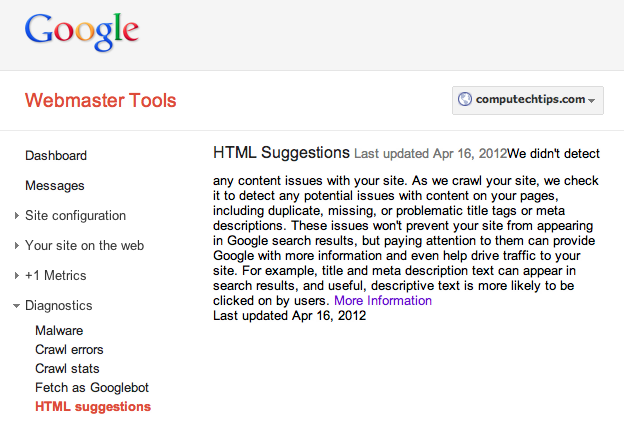

To diagnose content issues, you can use Google Webmaster Tools. Select your website in Webmaster Tools and go to Diagnostics > HTML suggestions. If there are no issues with your website, Webmaster Tools will report that it didn’t detect any content issues with your site.
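Webmaster Tools runs this check for you. As a rough offline analogue (not how Google’s tool works internally), here is a minimal Python sketch that flags missing titles and duplicated titles or meta descriptions, using only the standard library’s `html.parser`. It assumes you have already downloaded each page’s HTML; `HeadParser`, `find_content_issues`, and the sample URLs and HTML are hypothetical.

```python
from collections import defaultdict
from html.parser import HTMLParser


class HeadParser(HTMLParser):
    """Collect the <title> text and the meta description of one HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        values = dict(attrs)  # attribute names arrive lowercased
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (values.get("name") or "").lower() == "description":
            self.description = (values.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data  # title text may arrive in several chunks


def find_content_issues(pages: dict[str, str]) -> dict:
    """Report missing titles and duplicated titles/meta descriptions.

    pages maps each URL to the HTML already downloaded for it.
    """
    missing_titles = []
    by_title = defaultdict(list)
    by_description = defaultdict(list)
    for url, html in pages.items():
        parser = HeadParser()
        parser.feed(html)
        parser.close()
        title = parser.title.strip()
        if title:
            by_title[title].append(url)
        else:
            missing_titles.append(url)
        if parser.description:
            by_description[parser.description].append(url)
    return {
        "missing_titles": missing_titles,
        "duplicate_titles": {t: u for t, u in by_title.items() if len(u) > 1},
        "duplicate_descriptions": {d: u for d, u in by_description.items() if len(u) > 1},
    }


if __name__ == "__main__":
    home = '<title>My Blog</title><meta name="description" content="WordPress tips">'
    pages = {
        "/": home,
        "/page/2/": home,  # a paged archive reusing the home page's head tags
        "/hello-world/": "<title>Hello World</title>",
        "/untitled/": "<p>No head tags here</p>",
    }
    print(find_content_issues(pages))
```

Grouping pages by the exact title or description text is the simplest way to surface duplicates; the sample reports `/` and `/page/2/` as sharing both a title and a description, and `/untitled/` as missing a title.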

Thanks for this. In your example above, you have tags and categories allowed. I’ve heard this causes problems. What’s your opinion on that?

Never mind, you’re disallowing them, so that answers my question!

Yeah, both are already disallowed in the example above.